[May 2026] I will join Qualcomm as a Compute Chipset Product Management Intern this summer in San Diego, California!

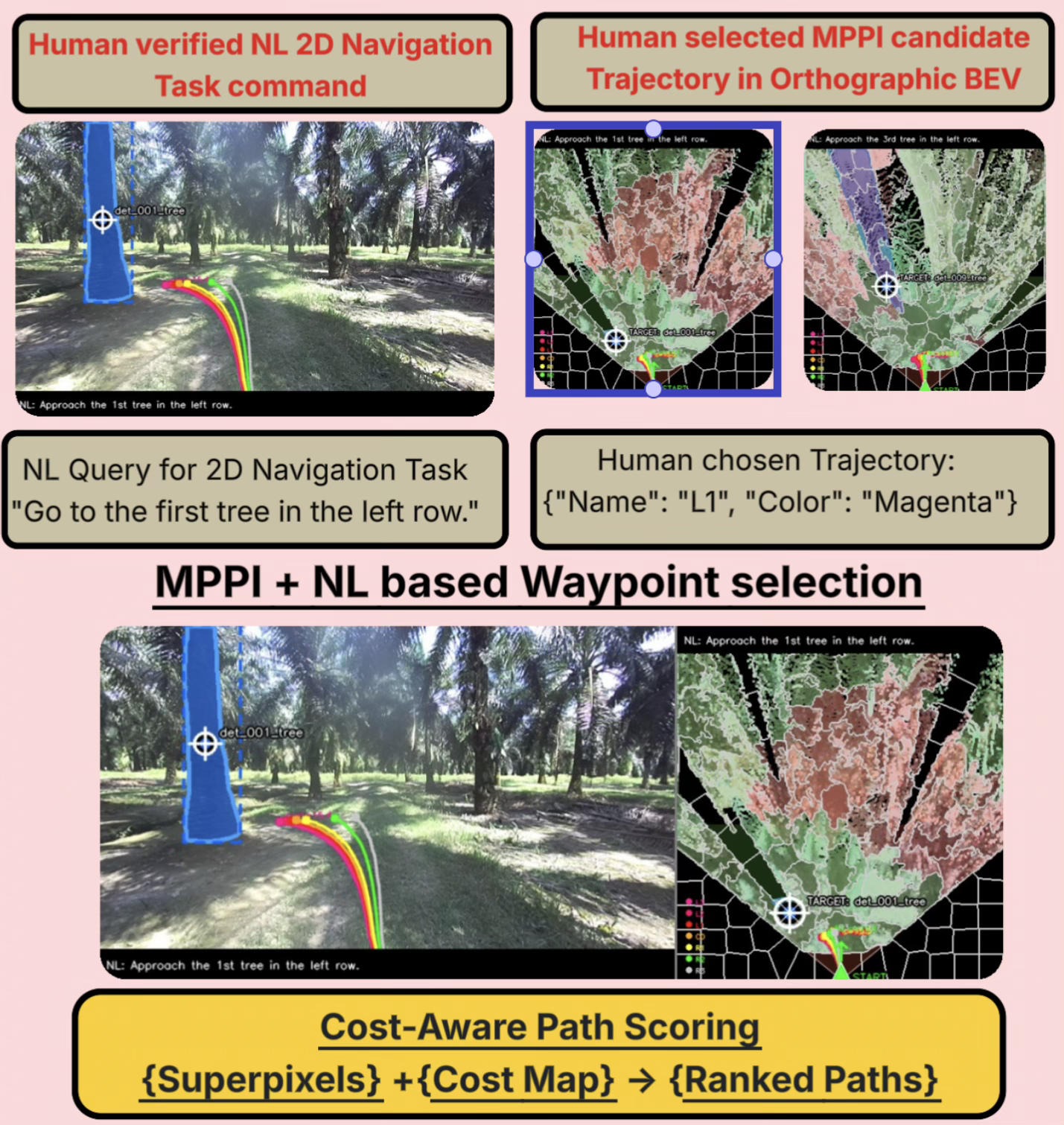

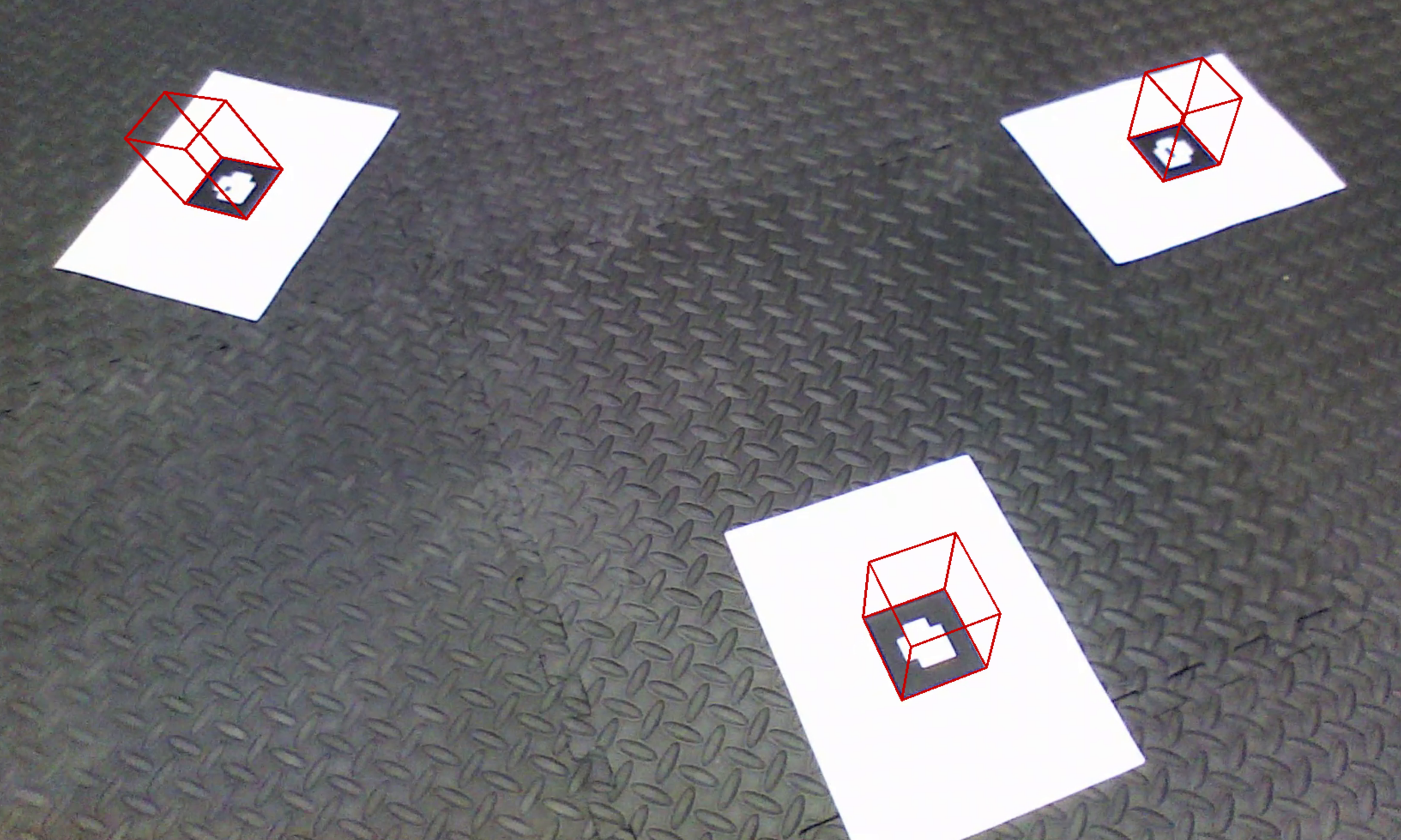

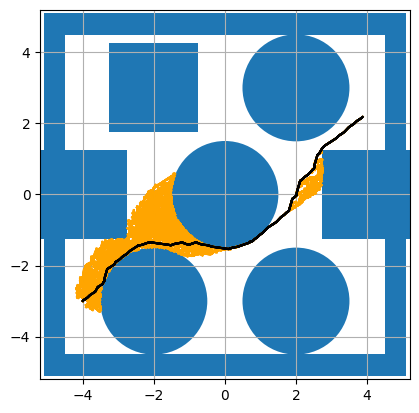

[Apr 2026] Our poster "WaypointGen: Natural Language to 2D Navigation Waypoints Generation using VLMs" was accepted to the CSL Student Conference (CSL SC 2026) at UIUC. [poster PDF]

[Mar 2026] Began preparing WaypointGen++ for submission to IEEE Robotics and Automation Letters (RA-L), extending our VLM-based waypoint generation pipeline to outdoor mobile manipulators.

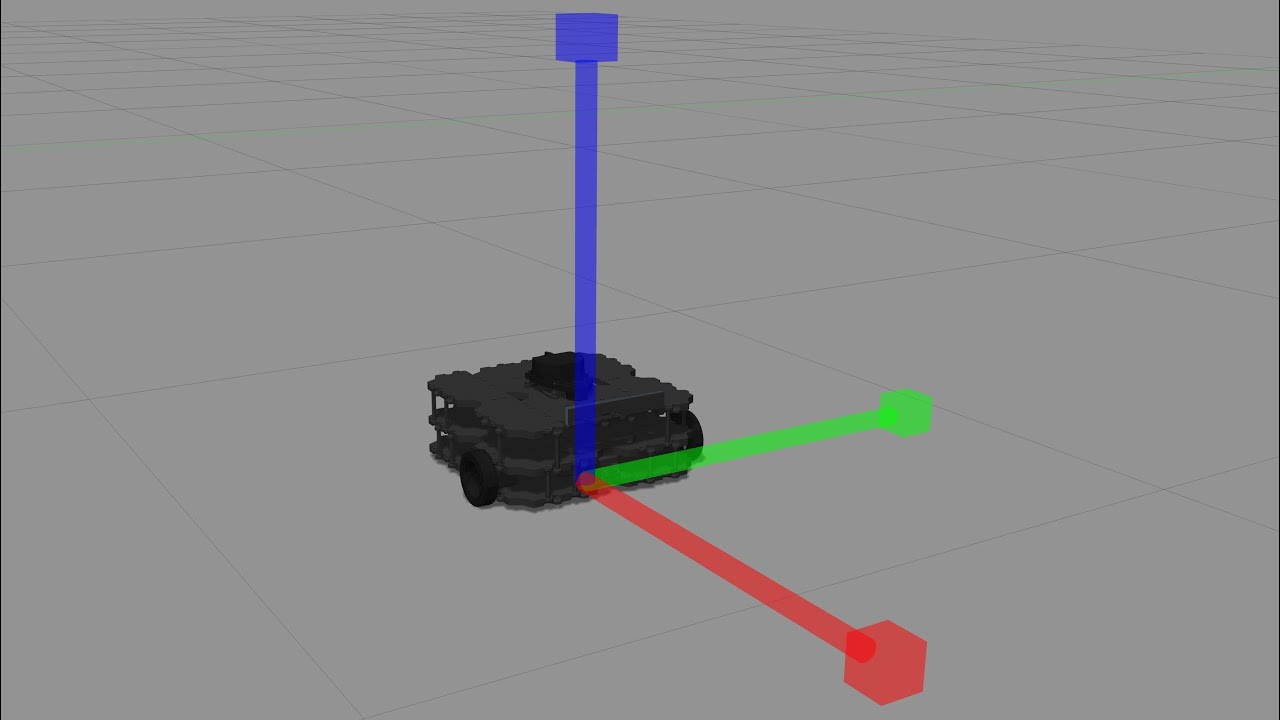

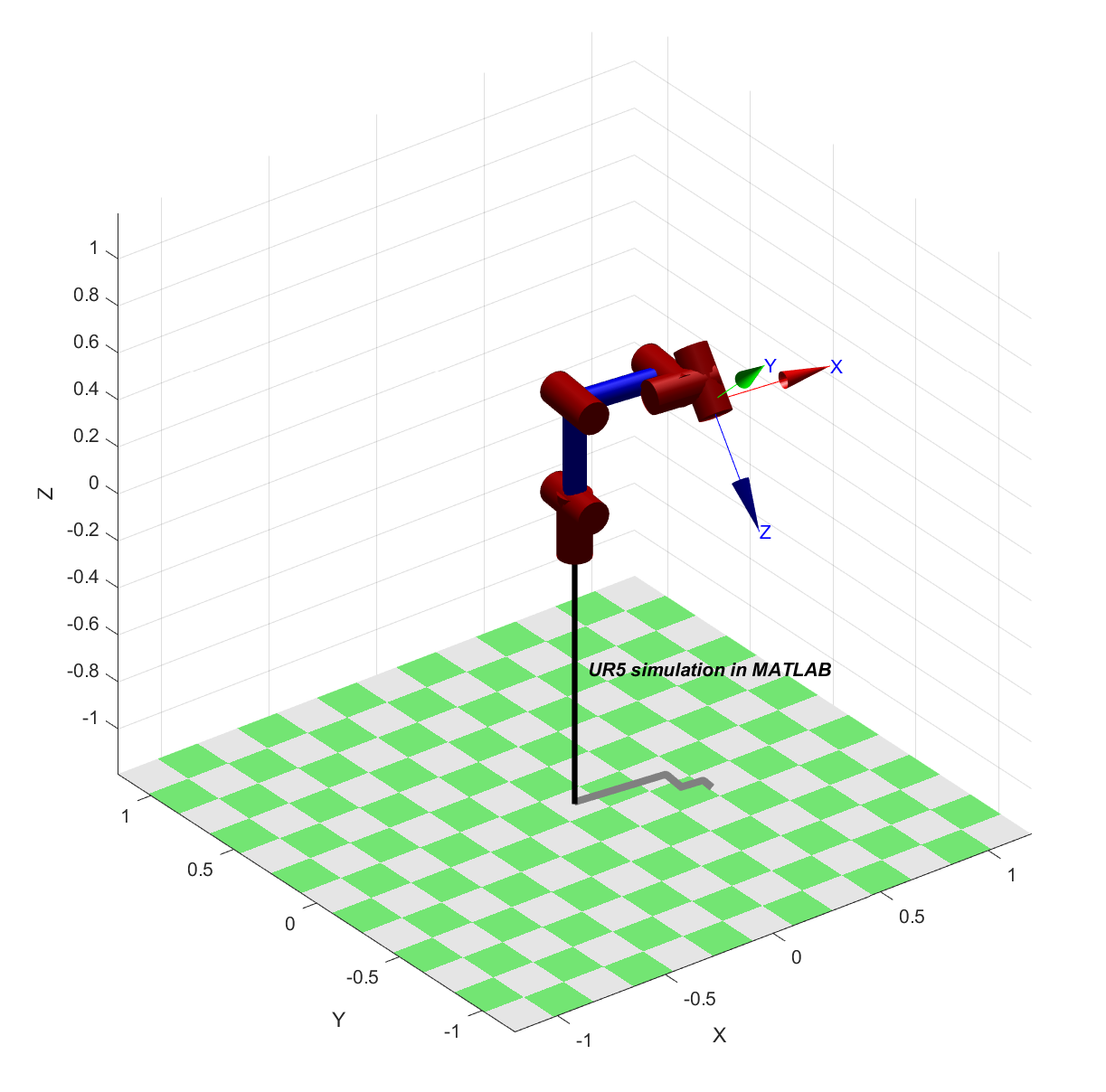

[Oct 2025] Released SLAM-ing Mars, a two-part Mobile Manipulator Exploration & SLAM challenge for CS498GC Fall 2025, in which students operated a Husky with a UR3 arm and a Robotiq gripper in a Mars Gazebo world. Part 1 (25 pts) covered robot setup and navigation. Part 2 (75 pts) extended to full SLAM with MoveIt 2 + RViz 2 waypoint following, 3D and 4D reconstruction of Thanksgiving-themed dynamic turkeys, and an optional Mars dust-storm bonus.

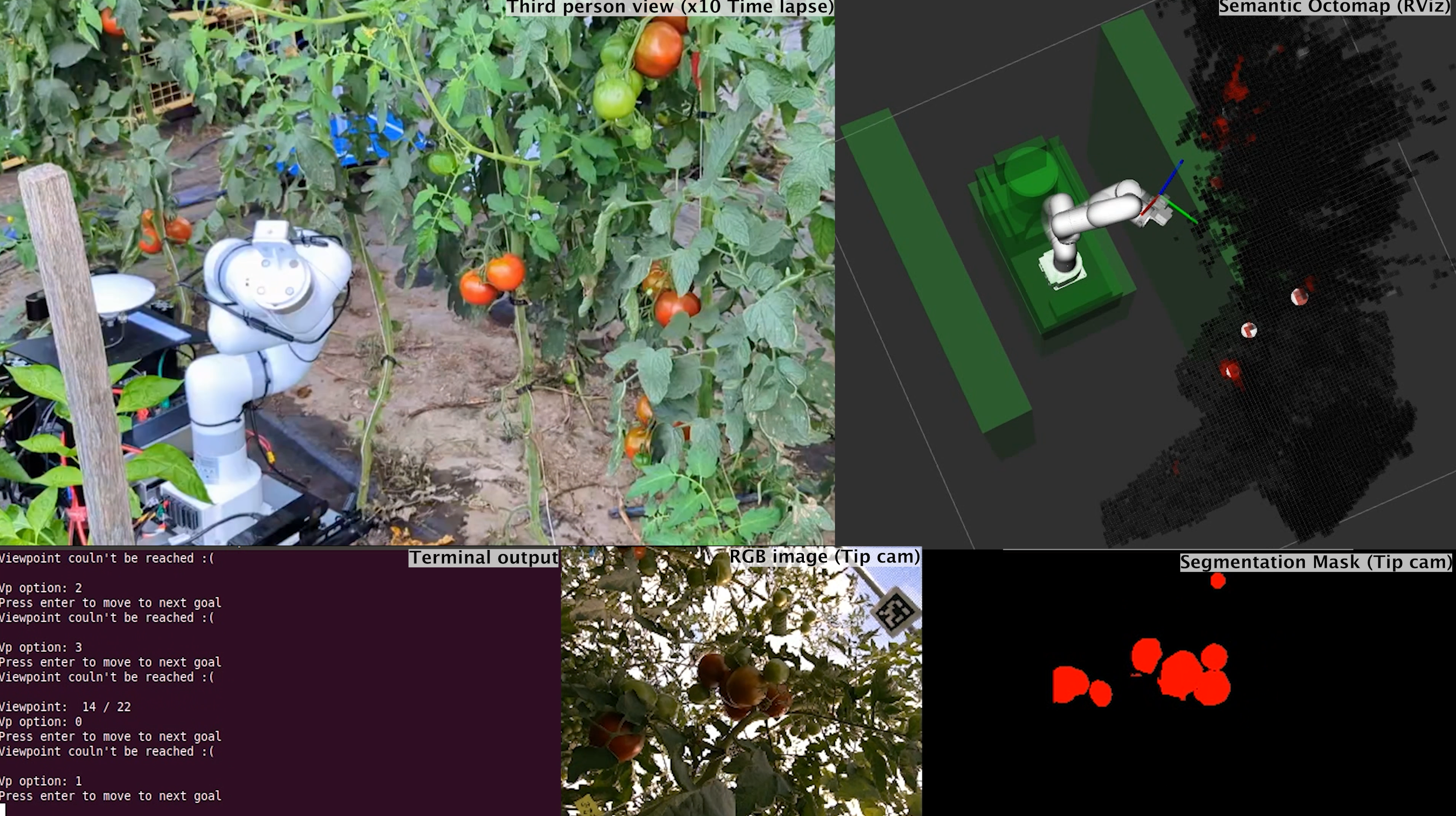

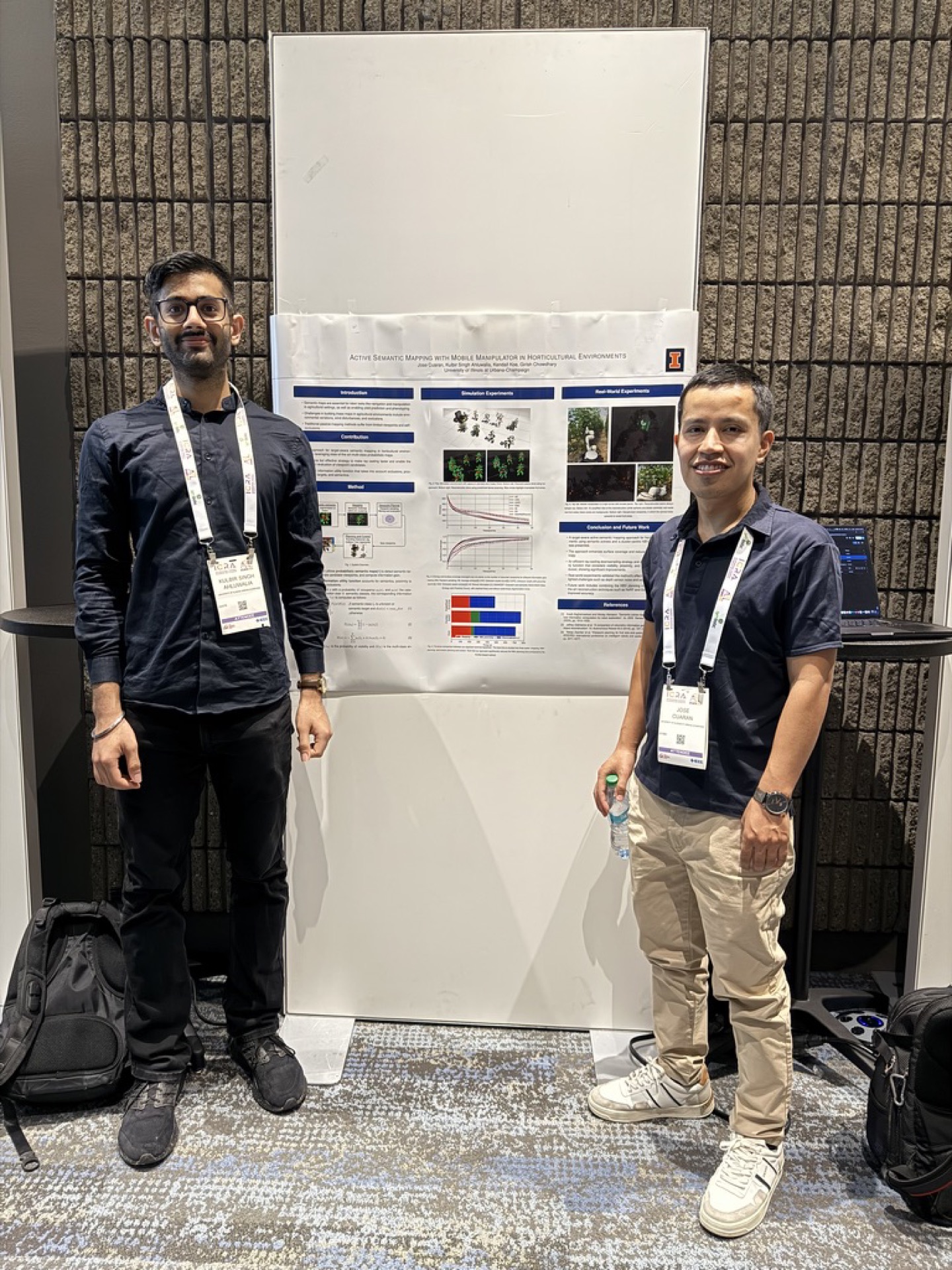

[May 2025] Our paper "Active Semantic Mapping with Mobile Manipulator in Horticultural Environments" was presented at ICRA 2025. arXiv:2412.10515 · project page

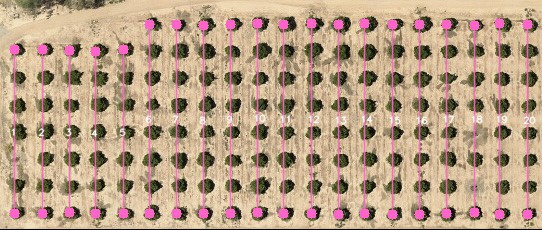

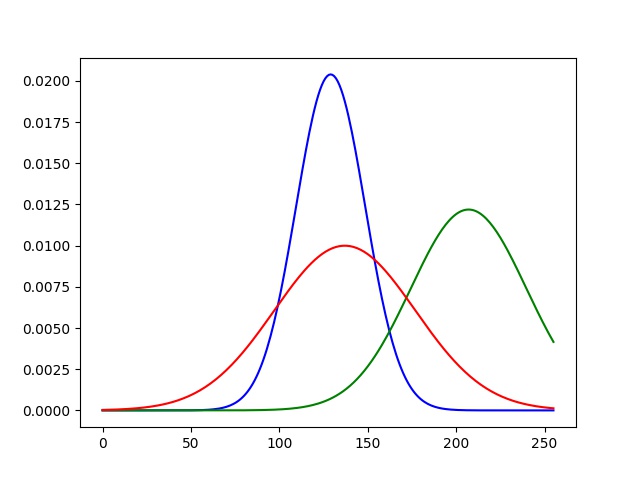

[May 2025] Joined EarthSense, Inc. as an AI Intern for Summer 2025 in Urbana, IL, deploying VLMs (Molmo-7B, Gemma-3-27B, Qwen-2.5-VL-72B, Llama4-Scout, SpatialVLM) for natural-language-conditioned navigation on outdoor agricultural robots.

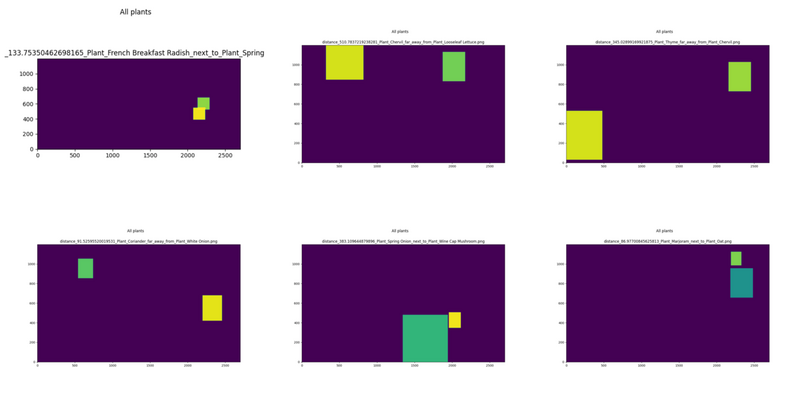

[Fall 2023] Presented the poster "Plant Placement using Natural Language Grounding" at the AIFARMS Annual Conference. [poster PDF]

[Aug 2022] Started my Ph.D. in Computer Science at UIUC, advised by Prof. Girish Chowdhary (DASLAB) and Prof. Julia Hockenmaier (HMR Lab).